How Do Language Models Remember Too Much?

Explore data memorization in LLMs and what it means for personal privacy, examining how models can leak training data and the implications for user security.

Have you ever had a long conversation with an AI chatbot and then wondered whether the information you shared might still be stored in the system’s memory? Perhaps you even gave a command like “forget my data” just to be safe. Well, that might not be enough…

"OmniGPT, a widely used AI chatbot aggregator that connects users to multiple LLMs, suffered a major breach, exposing over 34 million user messages and thousands of API keys to the public." (Elizabeth Jordan, 2025)

AI models, especially large language models (LLMs), are trained on enormous collections of text, which gives them powerful predictive and generative capabilities. However, with this power comes a significant risk: remembering too much. If personal data that hasn’t been properly anonymized makes its way into the training data, it can occasionally be recalled in surprising and concerning ways. Users who unknowingly contribute to these data pools may also be handing over private information, digital footprints, and personal details to the very systems they trust.

In this article, we’ll explore how LLMs struggle or even fail to “forget,” what kinds of privacy risks this poses for individuals, and how current legal frameworks are (or aren’t) addressing this new reality. We’ll also examine the technical and ethical pathways toward building safer AI systems. Because in the digital age, not being forgotten may sometimes be the most dangerous privilege of all.

What Is Data Memorization in Language Models?

“Memorization is not rare; it is a fundamental property of these models” (N. Carlini, 2021).

Data memorization refers to the phenomenon where a language model, during its training phase, inadvertently encodes specific pieces of information (often rare, sensitive, or personally identifiable data) into its internal parameters. Unlike general pattern learning, which enables the model to generate responses based on statistical correlations across large datasets, memorization involves the retention of exact sequences or factual data points that were part of the training corpus.

This is particularly concerning when such information can be reproduced verbatim in response to specific prompts, a vulnerability that poses substantial risks to data privacy, confidentiality, and compliance with regulations such as the General Data Protection Regulation (GDPR). In the context of large-scale models trained on web-scraped datasets, such memorization may occur even when the data was originally assumed to be anonymized, due to the model’s surprising ability to reconstruct identities from seemingly unidentifiable fragments.

Carlini et al. (2021) demonstrated that LLMs are capable of memorizing and regurgitating sensitive information from their training data verbatim. In their empirical study, the researchers extracted hundreds of memorized sequences from GPT-2, including names, email addresses, phone numbers, and 128-bit UUIDs.
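One way to make "verbatim regurgitation" concrete is to look for long runs of tokens that a model's output shares with a known training document. The sketch below is only an illustration of that idea, not the procedure used in the cited study: the function names, the whitespace tokenization, and the six-token threshold are all assumptions chosen for readability.

```python
def longest_shared_run(generated: str, training_text: str) -> list[str]:
    """Return the longest run of consecutive tokens present in both texts."""
    gen = generated.split()
    ref = training_text.split()
    best_len, best_end = 0, 0
    # Longest-common-substring dynamic programme, applied to tokens.
    prev = [0] * (len(ref) + 1)
    for i in range(1, len(gen) + 1):
        curr = [0] * (len(ref) + 1)
        for j in range(1, len(ref) + 1):
            if gen[i - 1] == ref[j - 1]:
                curr[j] = prev[j - 1] + 1
                if curr[j] > best_len:
                    best_len, best_end = curr[j], i
        prev = curr
    return gen[best_end - best_len:best_end]


def looks_memorized(generated: str, training_text: str, min_tokens: int = 6) -> bool:
    """Flag an output whose overlap with training text is suspiciously long.

    min_tokens is an arbitrary illustrative threshold, not a standard value.
    """
    return len(longest_shared_run(generated, training_text)) >= min_tokens
```

A short overlap such as a single common word is expected in any text; it is the long, exact runs that suggest the model is reproducing a specific document rather than generalizing.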

Understanding how and why language models memorize data is crucial not only for evaluating their safety and trustworthiness but also for informing the development of technical safeguards (such as differential privacy and red-teaming) and legal mechanisms (like data deletion rights and model auditing). Without such measures, users remain vulnerable to the unintended consequences of interacting with systems that may “remember” more than they should.

Source of the Problem: The Memory Power of Artificial Intelligence

Unintentional Inclusion of Personal Data in Training

LLMs are trained on massive datasets collected from the internet, and these data pools often contain personal information unintentionally. Sensitive data such as names, addresses, and email addresses from forum posts, social media content, and news articles cannot always be fully filtered out by automated systems. Moreover, even data believed to be anonymized can be re-identified by combining different fragments. For example, a few details such as your city of residence, date of birth, and profession can be enough, taken together, to single you out.

As a result, the model may memorize certain personal data, which can then be disclosed unintentionally in response to specific prompts. The unintentional inclusion of personal data in training sets therefore poses serious ethical and legal risks.
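The re-identification risk described above can be illustrated by counting how many records share the same combination of seemingly harmless attributes. The following is a minimal sketch with entirely synthetic records; the field names and the helper functions `group_sizes` and `unique_records` are hypothetical and exist only for this example.

```python
from collections import Counter


def group_sizes(records, quasi_identifiers):
    """Count how many records share each combination of attribute values."""
    return Counter(tuple(r[q] for q in quasi_identifiers) for r in records)


def unique_records(records, quasi_identifiers):
    """Return the records that are the only ones with their combination."""
    sizes = group_sizes(records, quasi_identifiers)
    return [
        r for r in records
        if sizes[tuple(r[q] for q in quasi_identifiers)] == 1
    ]


# Synthetic data: no names or direct identifiers are present.
records = [
    {"city": "Izmir", "birth_year": 1990, "profession": "nurse"},
    {"city": "Izmir", "birth_year": 1990, "profession": "teacher"},
    {"city": "Izmir", "birth_year": 1985, "profession": "nurse"},
    {"city": "Ankara", "birth_year": 1990, "profession": "nurse"},
    {"city": "Izmir", "birth_year": 1990, "profession": "nurse"},
]

singled_out_by_city = unique_records(records, ["city"])
singled_out_by_all = unique_records(records, ["city", "birth_year", "profession"])
```

In this toy dataset, city alone isolates one person, while the three attributes together isolate three of the five. The more attributes that can be combined, the smaller each group becomes, which is why removing names alone does not guarantee anonymity.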

The Memorization Threat of AI Systems

Language models are often thought to “learn patterns” much as humans do, but the learning process is sometimes far more literal than expected. These models are trained to predict the next token as accurately as possible, and for rare sequences the most accurate strategy can be to memorize them rather than generalize. The model may encode such information so tightly that it never surfaces in ordinary conversations, yet carefully crafted prompts can bring it out. Security researchers call this a training data extraction attack; it is often discussed alongside “prompt injection,” a related technique in which crafted input overrides a model’s instructions. Either way, the effect is like forcibly opening the model’s hidden drawers.

A 2023 study showed that, through this method, language models could partially reveal credit card numbers and identity information they had seen during training. In other words, the model can leak private data without anyone intending it to. The danger affects not only users but also the companies developing the systems; the same method can be used to extract internal communications, trade secrets, or critical information about the model’s training data.
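To see why next-token prediction can slide into memorization, consider a deliberately tiny stand-in for a language model: a table that counts which token followed each pair of tokens in the training text. Real LLMs are neural networks rather than count tables, so this is an analogy only, and the corpus, the "access code", and the functions `train` and `complete` are invented for the illustration.

```python
from collections import Counter, defaultdict


def train(corpus, context=2):
    """Count which token follows each window of `context` tokens."""
    model = defaultdict(Counter)
    for line in corpus:
        tokens = line.split()
        for i in range(len(tokens) - context):
            model[tuple(tokens[i:i + context])][tokens[i + context]] += 1
    return model


def complete(model, prompt, max_new_tokens=10, context=2):
    """Greedily append the most frequent next token until none is known."""
    tokens = prompt.split()
    for _ in range(max_new_tokens):
        followers = model.get(tuple(tokens[-context:]))
        if not followers:
            break
        tokens.append(followers.most_common(1)[0][0])
    return " ".join(tokens)


corpus = [
    "the weather is nice today",
    "the weather is nice today",
    "the weather is cold today",
    "my access code is 7 4 1 9",  # appears once: stand-in for rare private data
]

model = train(corpus)
common = complete(model, "the weather")     # generalizes to the majority pattern
leaked = complete(model, "my access code")  # only one continuation was ever seen
```

For the common prefix the model picks the most frequent pattern, but for the rare prefix there is only one continuation it has ever seen, so the best prediction is the private sequence itself. A prompt that happens to start with the right words is enough to pull it out.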

The OWASP Top 10 for LLM Applications entry LLM02:2025 Sensitive Information Disclosure lists three common forms of this risk:

PII Leakage (Personally Identifiable Information): Exposure of sensitive personal details such as names, addresses, or government IDs.

Proprietary Algorithm Exposure: Unintended disclosure of confidential source code, model weights, or proprietary techniques.

Sensitive Business Data Disclosure: Leaks of trade secrets, strategic plans, or undisclosed corporate information.

The prevention and mitigation strategies outlined in the same OWASP entry emphasize regular model audits, rigorous dataset sanitization before training, the application of differential privacy, and controlled access to model outputs. Additionally, implementing strong red-teaming processes and restricting prompt patterns known to trigger sensitive disclosures can significantly reduce the likelihood of such incidents.
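Sanitization of this kind, whether applied to training data or to model outputs, often starts with simple pattern matching. The sketch below redacts a few PII-like patterns using regular expressions. The three patterns and the `redact` helper are illustrative assumptions, far from exhaustive, and a production system would need broader coverage (names, for instance, require entity recognition rather than regex).

```python
import re

# Illustrative patterns only; real PII formats vary widely by country.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\+?\d{1,3}[ -]\d{3}[ -]\d{3}[ -]\d{4}\b"),
}


def redact(text):
    """Replace each match with a placeholder and report how many were found."""
    counts = {}
    for label, pattern in PATTERNS.items():
        text, found = pattern.subn(f"[{label}]", text)
        counts[label] = found
    return text, counts


sample = "Mail jane.roe@example.com or call +1 202-555-0143, SSN 123-45-6789."
clean, counts = redact(sample)
```

The returned counts are useful for auditing: a sudden spike in redactions from one data source is a signal that the source deserves closer review before it is used for training.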

Lack of Awareness and Digital Footprint in User Interactions with AI

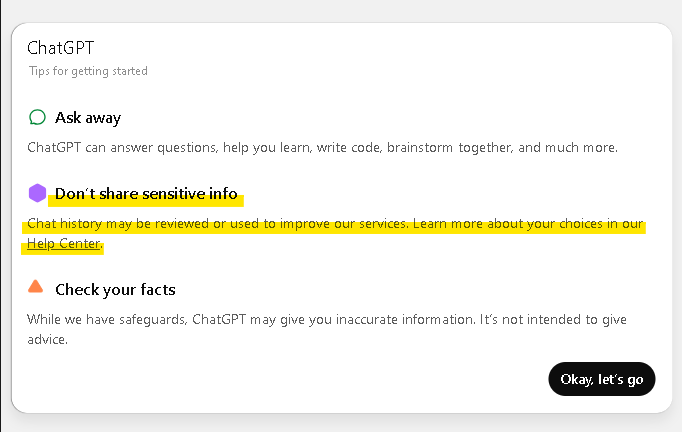

Most users assume that conversations with AI systems are temporary and that the information they share is deleted. In reality, a significant portion of these interactions is stored and analyzed to improve and develop the systems. Moreover, these data collection practices are often buried in long and complex privacy policies; users click “I agree” without reading them, thereby allowing their data to be stored and sometimes shared with third parties. As a result, users often do not realize that a simple conversation leaves a much deeper and more permanent “digital footprint.”

Additionally, in a 2024 survey, 62% of users believed that AI platforms do not store their data, whereas most platforms in fact use this data for purposes such as model development, analytics, and marketing. Because the majority of users are unaware of these data processing practices, their trust is built on misinformation. Every sentence written, every question asked, and every file shared contributes to the data pool of AI systems, meaning users unknowingly become part of a much larger data network.

The Inapplicability of the “Right to be Forgotten”

You may have heard of the “right to be forgotten” for online content; legally, you can request the deletion of your personal data. But what if this data has been processed into an AI model? This is where the real problem begins. Once a model has been trained, erasing a specific piece of information from it is about as practical as trying to wipe only certain letters off a page with a giant sponge, because the information is spread across the model’s parameters rather than stored in one place.

Therefore, although laws such as the GDPR or Turkey’s KVKK grant the right to be forgotten in theory, enforcing it inside a language model is almost impossible in practice. Moreover, information is not only stored in the model’s parameters; it can also remain in backup training data held by developers or in additional datasets used during “fine-tuning.” This means that even if you believe your data has been deleted, it can continue to live on in different versions.

Possible Solutions

Starting with a Clean Slate for Training

Before model training, personal data can be detected and removed using tools such as regular expressions (regex) and Named Entity Recognition (NER). In 2023, OpenAI announced that it used special NER models to detect accidentally included social security numbers in training sets.

Additionally, differential privacy can be applied to statistically mask each user’s contribution, and with federated learning, data can be processed locally on devices rather than on a central server. Google’s Gboard keyboard uses this method to learn from user typing while the raw text stays on the device. Apple’s “on-device Siri” update similarly processes voice commands on the device without sending them to the cloud.

However, the 2019 voice assistant scandal, in which contractors were found to be listening to users’ Siri recordings, showed that these systems can still expose private data if left unchecked. Therefore, technical solutions must always be supported by third-party audits and independent reports.
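The differential privacy idea mentioned above can be shown in its simplest form: answering a counting question with calibrated random noise, so that the presence or absence of any single person changes the answer only slightly. This is a minimal sketch of the Laplace mechanism on a count, not a description of how any particular vendor trains its models; training-time variants add noise to gradient updates instead. The function names and the sample data are assumptions made for the example.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return scale * (rng.expovariate(1.0) - rng.expovariate(1.0))


def private_count(values, predicate, epsilon, rng):
    """Return a noisy count of the values that satisfy `predicate`.

    Adding or removing one person changes a count by at most 1, so the
    sensitivity is 1 and the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)


rng = random.Random(0)              # seeded only to make the sketch repeatable
ages = [23, 35, 41, 52, 67, 29]     # synthetic data
noisy = private_count(ages, lambda a: a > 40, epsilon=1.0, rng=rng)
```

A smaller epsilon means more noise and stronger privacy, while a larger epsilon gives more accurate answers with weaker protection. Any single answer is imprecise, but averages over many users remain useful, which is the trade-off differential privacy is designed to manage.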

Transparency, Legal Compliance, and Accountability

Using an “opt-in” approach, where data is collected only with explicit user consent, increases trust. Platforms like Signal have strengthened user loyalty by fully sharing their data collection and processing policies. Similarly, Microsoft publishes annual transparency reports for its Copilot products.

From a legal perspective, it is crucial to adapt GDPR and KVKK to LLMs and to implement laws like the EU’s AI Act, which introduces conformity assessments for high-risk AI systems. The GDPR proceedings over Meta’s behavioral advertising, which came to a head in 2022, dragged on for a long time because of differing legal processes in different countries, underscoring the importance of global compliance.

The Knot of the Future: Trust, Ethics, and Shared Responsibility

It is possible to develop ethical and trustworthy AI systems where data is secure; however, this goal becomes meaningful only when it is supported not just by technological advances but also by ethical, legal, and social responsibility. Protecting privacy is not only a matter of lines of code but also of the decision-making processes of developers, the regulations of lawmakers, and the conscious choices of users.

Building a safe and fair AI ecosystem is not the duty of just one group; it is a shared responsibility of all actors, from users to developers and from lawmakers to platform providers. As technology advances rapidly, this collaboration will both pave the way for innovation and help rebuild trust in the digital world.

While artificial intelligence systems offer powerful capabilities, they also introduce complex ethical and legal dilemmas. The unintended memorization of personal data by LLMs, the digital footprints users leave behind without realizing it, and the practical inapplicability of the “right to be forgotten” all demand a critical reevaluation of these technologies, not just from a technical standpoint, but from a societal one as well. In the face of systems that cannot forget, defending individuals’ right to be forgotten is no longer merely a legal issue; it has become a necessary step toward redefining privacy in the digital age.

Creating a secure digital future cannot rely solely on technological solutions. Transparent data policies, independent audit mechanisms, user awareness initiatives, and globally harmonized legal frameworks must come together to form a holistic approach. The issue of AI’s inability to forget can only be addressed if all stakeholders (developers, lawmakers, platform providers, and users) share the responsibility.

Because even if digital systems cannot forget, we can choose, through our conscious decisions, what should be remembered and what must be left behind.

References:

Carlini, N., et al. (2021). Extracting Training Data from Large Language Models. USENIX Security Symposium. https://www.usenix.org/conference/usenixsecurity21/presentation/carlini-extracting

Jordan, E. (2025). Global Railway Review. https://www.globalrailwayreview.com/article/203275/your-ai-isnt-safe-how-llm-hijacking-and-prompt-leaks-are-fueling-a-new-wave-of-data-breaches/

Brown, T. B., et al. (2020). Language Models are Few-Shot Learners. https://dl.acm.org/doi/abs/10.5555/3495724.3495883

White, A., & Huang, L. (2023). The Privacy Paradox in AI: Memory Retention and User Trust.

Zhou, M., et al. (2023). Language Models as Knowledge Repositories: Opportunities and Risks. arXiv preprint arXiv:2305.12345.

Kumar, R., & Singh, P. (2022). Ethical Challenges in Retaining Conversational Data. Journal of AI Ethics, 4(3), 245–262.

Li, Y., & Chen, H. (2023). User Perceptions of AI Memory: Privacy vs. Personalization. Proceedings of CHI 2023.

KVKK - Kişisel Verileri Koruma Kurumu (Turkish Personal Data Protection Authority) https://kvkk.gov.tr

GDPR - General Data Protection Regulation (EU) https://gdpr-info.eu/

LLM02:2025 Sensitive Information Disclosure https://genai.owasp.org/llmrisk/llm022025-sensitive-information-disclosure/

CBS News: Apple to stop Siri program that lets contractors listen to users' voice recordings

The EU Artificial Intelligence Act

TechCrunch: Meta's behavioral ads will finally face GDPR privacy reckoning https://techcrunch.com/2022/12/06/meta-gdpr-forced-consent-edpb-decisions/