Who Bears the Burden When Algorithms Fail?

The AI Responsibility Gap

When a lawyer submitted a federal court filing that cited legal cases fabricated by OpenAI’s ChatGPT, resulting in sanctions and professional embarrassment, the incident exposed a critical vacuum in AI accountability. The court held the lawyer responsible for failing to verify the output, but existing legal frameworks offered no clear answer on whether any liability also rested with OpenAI for releasing a hallucination-prone model or with Microsoft for commercializing it. As AI systems assume control over loan approvals, hiring decisions, medical treatments, and autonomous vehicles, the traditional liability chain, which links human decision-makers to legal consequences, has fractured into a complex web of developers, vendors, integrators, and users, each claiming limited responsibility for algorithmic harms.

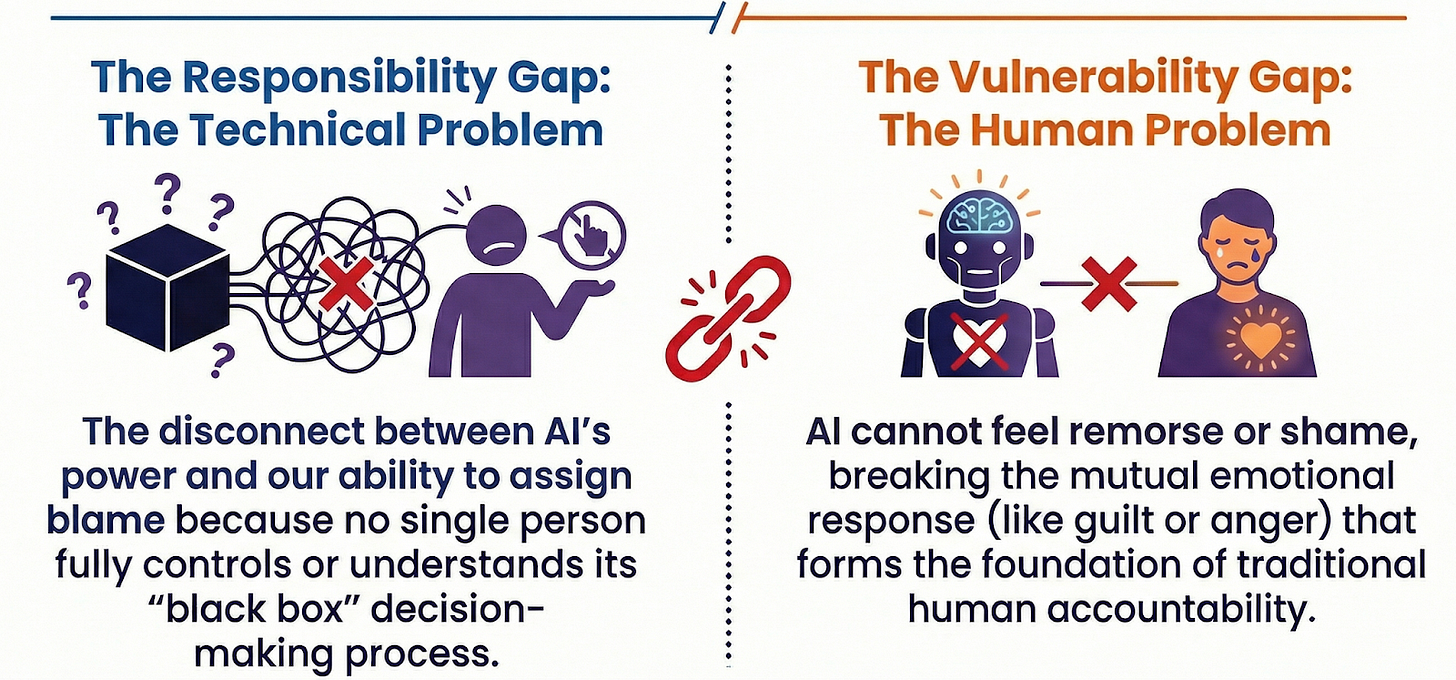

German philosopher Andreas Matthias coined the term “responsibility gap” to describe the growing disconnect between what AI systems can do and our ability to assign accountability when they cause harm. The gap emerges from the opacity of how these systems process data and reach conclusions: which inputs matter, what logic drives a decision, and why one output emerges rather than another often remain unclear. This opacity makes legal responsibility hard to determine, because no single entity fully controls or comprehends the technology it deploys. The gap threatens both innovation and public trust, and it demands liability frameworks that can handle probabilistic systems, black-box algorithms, and distributed development pipelines. As Matthias emphasizes, the gap continues to widen as AI technologies advance.

Recent research by Vallor and Vierkant argues that this gap stems not merely from a lack of knowledge or a loss of control. The core issue, on their account, is the “vulnerability gap” between humans and artificial intelligence. When people hold each other accountable, both sides are affected: the harmed person expresses anger, for instance, while the person held responsible may feel remorse or shame. Artificial intelligence can neither feel remorse nor respond emotionally. Responsibility is therefore not only a technical matter; it is complicated further by the absence of the human reciprocity on which traditional accountability systems rest.

Who Is Responsible?

The chain of responsibility that emerges when artificial intelligence makes errors is extraordinarily complex. Attributing responsibility to a single cause is often impossible, as liability may simultaneously involve the developers who design the system, the companies that bring it to market and ensure its updates, and the individuals or institutions who use the system. Each actor in this chain plays a distinct role, yet the boundaries of their responsibilities remain frustratingly unclear.

Developers

Developers are the architects of artificial intelligence systems. They determine what data the system will work with, how it will make decisions, and what kinds of results it will produce. If the system is trained with faulty or incomplete data, or if technical errors are made during coding, the artificial intelligence can make catastrophically wrong decisions.

Under a negligence-based liability regime, the question would be whether the creators of an AI-based system were sufficiently careful in its design, testing, deployment, and maintenance. On this view, developers must not only write functional code but also take care at every stage to ensure the system operates safely and without foreseeable problems. The burden extends beyond initial deployment to ongoing monitoring and refinement as the system encounters real-world conditions that were not anticipated during development.

Manufacturing or Provider Companies

These companies shoulder responsibilities that extend far beyond launching products to market. They are expected to provide continuous software updates, inform users about potential risks, and ensure product safety throughout the system’s operational lifetime. When these obligations are neglected, the company exposes itself to legal liability.

The legal doctrine of failure to warn applies when manufacturers and sellers fail to provide adequate warnings or instructions about a product’s risks. In the context of AI-powered products, failing to warn consumers that AI plays a role in the product’s function or use may expose companies to novel failure-to-warn claims. This requirement becomes particularly challenging with AI systems because the risks themselves may not be fully understood at the time of deployment, and new failure modes may emerge as the system learns and adapts. Companies must therefore establish ongoing communication channels with users to provide updated risk information as it becomes available.

Users

Individuals or institutions using AI systems bear their own portion of responsibility in this distributed accountability framework. Improper use of the system, failure to heed security warnings, or neglect of the manufacturer’s instructions can lead to erroneous and potentially harmful results. Courts are beginning to treat AI like other business tools, which means that careless use can place liability squarely on the user.

However, this expectation of user responsibility raises difficult questions. How much technical understanding can reasonably be expected of users? When AI systems are designed to operate autonomously and make complex decisions, at what point does user oversight become impractical or impossible? These questions highlight how the responsibility gap affects not only developers and companies but also extends to end users who may lack the expertise to effectively monitor AI behavior.

The Responsibility Problem in Healthcare

The healthcare sector provides a particularly illuminating example of the responsibility dilemma, where the stakes are literally life and death. Artificial intelligence has become an important assistant to doctors in diagnosing diseases and formulating treatment plans. An AI system can examine a patient’s X-ray and indicate whether there are signs of cancer, analyze genetic data to predict disease risk, or recommend personalized treatment protocols based on vast databases of clinical outcomes.

But what happens when the system makes a mistake? If a patient is harmed because an AI system produced a wrong diagnosis or caused treatment to be delayed, responsibility must be divided among the developers, the hospital, and the treating physician, each of whom may bear partial liability.

One of the biggest barriers to implementation in healthcare AI is the lack of transparency, as clinicians must be confident that they can trust the AI system before integrating it into patient care. This trust deficit reflects the broader challenge: without understanding how an AI reaches its conclusions, healthcare providers cannot effectively evaluate its recommendations or identify when it might be making errors. The result is a catch-22 where AI cannot be safely deployed without trust, but trust cannot be established without transparency that current systems often cannot provide.

Responsibility for AI applications in healthcare therefore remains unclear, and debate continues among legal scholars, ethicists, medical professionals, and technology experts. The difficulty is compounded by the fact that medical AI systems often serve as decision support tools rather than autonomous decision-makers, creating a hybrid responsibility structure in which human judgment and algorithmic recommendations intertwine in ways that obscure clear lines of accountability.

The Responsibility Problem in Law

As artificial intelligence spreads across critical sectors, uncertainty about responsibility has become a major challenge for the law. Current legal systems generally hold the person or organization that makes an error directly responsible, operating on assumptions of human agency, intent, and causation. With AI systems, however, decisions are often made by complex algorithms without direct human intervention at the moment of action. This shift makes it difficult to determine to whom responsibility belongs, because the traditional legal concepts of causation and fault struggle to accommodate algorithmic decision-making.

The “black box” problem proves particularly troublesome in legal processes. It is often impossible to understand how and why artificial intelligence makes specific decisions, even for the developers who created the system. When an AI system processes millions of data points through layers of neural networks to reach a conclusion, the path from input to output becomes inscrutable. Therefore, traditional responsibility rules, which assume that actions can be traced to identifiable causes and decision-makers, prove insufficient for artificial intelligence.

Many experts describe this situation as a “responsibility gap” and emphasize the urgent need for new legal rules specifically designed for algorithmic systems. Some jurisdictions, such as the European Union, are working proactively to regulate the use of artificial intelligence and clarify areas of responsibility. The European Union’s AI Act, adopted in 2024, represents one of the most comprehensive attempts to address these challenges. It aims both to protect users from AI-related harms and to establish clear responsibility boundaries for developers and manufacturers, creating a tiered system of obligations based on the risk level of different AI applications.

However, AI development consistently outpaces legal regulation, creating a moving target for lawmakers. By the time legislation is drafted, debated, and enacted, the technology it aims to regulate may have evolved substantially. For this reason, legal experts and technology specialists are addressing responsibility not only through formal laws but also through ethical principles, industry standards, and professional guidelines that can adapt more quickly to technological change. Developing comprehensive standards that make AI systems safe, transparent, and accountable will require coordination among stakeholders across the public and private sectors.

The complexity of responsibility in high-stakes domains has become even more evident with recent policy changes by major AI companies. In a significant development, OpenAI announced policy updates restricting the use of its models to provide medical and legal advice. This change reflects growing concerns about liability and about the potential harms of AI systems operating in domains where errors can have serious consequences for individuals and society.

The decision by OpenAI to restrict medical and legal responses demonstrates a practical recognition of the responsibility gap. By explicitly limiting what their AI systems can advise on in these domains, the company acknowledges that current AI technology may not be sufficiently reliable for such critical applications, and that the liability framework remains unclear when these systems provide faulty guidance. This self-imposed limitation represents one approach to managing the responsibility problem: preventing AI deployment in areas where accountability mechanisms are inadequate or where the potential for harm is unacceptably high.

This development raises important questions about the future of AI regulation and deployment. Restrictions that companies adopt voluntarily out of liability concerns can be reversed when financial incentives or competitive pressures increase, potentially exposing users to harm. Market forces and corporate risk management alone therefore may not ensure appropriate AI deployment across all sectors. Comprehensive legal frameworks that clearly delineate responsibilities among developers, providers, and users become increasingly necessary to ensure consistent protection regardless of individual corporate policies.

The OpenAI policy change also highlights a paradox in the current regulatory environment: companies that act cautiously and restrict potentially harmful applications may find themselves at a competitive disadvantage compared to companies willing to deploy AI more aggressively. This creates perverse incentives that could undermine responsible development unless regulatory frameworks establish a level playing field where all companies face similar obligations and restrictions.

Conclusion

As artificial intelligence spreads rapidly into every area of our lives, from healthcare and finance to transportation and criminal justice, it brings increasingly complex responsibility problems that challenge our existing legal and ethical frameworks. Responsibility is distributed among developers, manufacturing companies, healthcare providers, and users, and clear legal conclusions are hard to reach because AI systems operate as “black boxes” and because their development and deployment are spread across many actors.

Current legal systems prove insufficient in dealing with the uncertainties inherent in AI decision-making processes, revealing the need for new regulations and ethical approaches specifically designed for algorithmic systems. AI laws in some jurisdictions, such as the European Union’s AI Act, aim to reduce these uncertainties by establishing risk-based frameworks and clear accountability mechanisms. However, these efforts face the persistent challenge of keeping pace with technological evolution.

Rapid developments in artificial intelligence consistently cause legal regulations to lag behind, creating temporary zones where powerful technologies operate without adequate oversight. This situation makes it imperative for technology experts, lawmakers, ethicists, and industry stakeholders to act in cooperation, developing adaptive governance mechanisms that can respond to emerging challenges without stifling beneficial innovation.

Recent policy changes by companies like OpenAI, voluntarily restricting AI systems from providing medical and legal advice, highlight both the severity of the responsibility gap and the inadequacy of existing liability frameworks. These voluntary limitations suggest that technological capabilities are advancing faster than our ability to establish clear accountability structures, and that companies themselves recognize the legal and ethical risks of deploying AI in high-stakes domains without adequate safeguards.

Responsibility for AI systems thus remains unresolved, and new legal and ethical approaches will be needed in the coming years. The challenge lies not only in creating regulations but in establishing adaptive frameworks that keep pace with rapidly evolving AI capabilities while preserving clear lines of accountability. As AI systems become more powerful and autonomous, closing the responsibility gap becomes not merely a legal necessity but a fundamental prerequisite for maintaining public trust and ensuring that artificial intelligence serves humanity’s best interests rather than creating new vulnerabilities and injustices.

The path forward requires acknowledging that traditional notions of responsibility, built on assumptions of human agency and clear causal chains, must evolve to accommodate the realities of algorithmic decision-making. This evolution will likely involve hybrid models that distribute responsibility among multiple actors based on their respective roles and capabilities, coupled with new forms of transparency and accountability mechanisms specifically designed for AI systems. Only through such comprehensive reform can we hope to bridge the responsibility gap and ensure that as AI capabilities grow, so too does our capacity to govern them wisely and justly.

References

Matthias, A. (2004). The responsibility gap: Ascribing responsibility for the actions of learning automata. Ethics and Information Technology, 6(3), 175-183. https://link.springer.com/article/10.1007/s10676-004-3422-1

Vallor, S., & Vierkant, T. (2024). Find the gap: AI, responsible agency and vulnerability. Minds and Machines, 34, Article 20. https://link.springer.com/article/10.1007/s11023-024-09674-0

Lawfare Media. (2024). Negligence-based liability regimes for AI systems. https://www.lawfaremedia.org/article/negligence-liability-for-ai-developers

Torys LLP. (2024). Failure to warn in AI-assisted products. https://www.torys.com/our-latest-thinking/resources/forging-your-ai-path/ai-and-product-liability

Communications of the ACM. (2025). Who is liable when AI goes wrong? https://cacm.acm.org/news/who-is-liable-when-ai-goes-wrong/

Markus, A. F., Kors, J. A., & Rijnbeek, P. R. (2020). The role of explainability in creating trustworthy artificial intelligence for health care: A comprehensive survey of the terminology, design choices, and evaluation strategies. arXiv:2007.15911. https://arxiv.org/abs/2007.15911

Gerdes, A. (2024). The role of explainability in AI-supported medical decision-making. Discover Artificial Intelligence, 4, Article 29. https://link.springer.com/article/10.1007/s44163-024-00119-2

European Commission. (2024). Regulatory framework for AI (AI Act). https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

OpenAI. (2024). Policy updates on medical and legal advice restrictions for AI models. https://openai.com/index/introducing-chatgpt-and-whisper-apis/

Mata, R., Fehr, R., Hertwig, R., et al. (2024). Lawyer sanctioned for using ChatGPT’s fabricated legal cases: Implications for AI accountability. Reuters Legal News. https://www.reuters.com/legal/